Industrial use of GPUs (Graphics Processing Units)

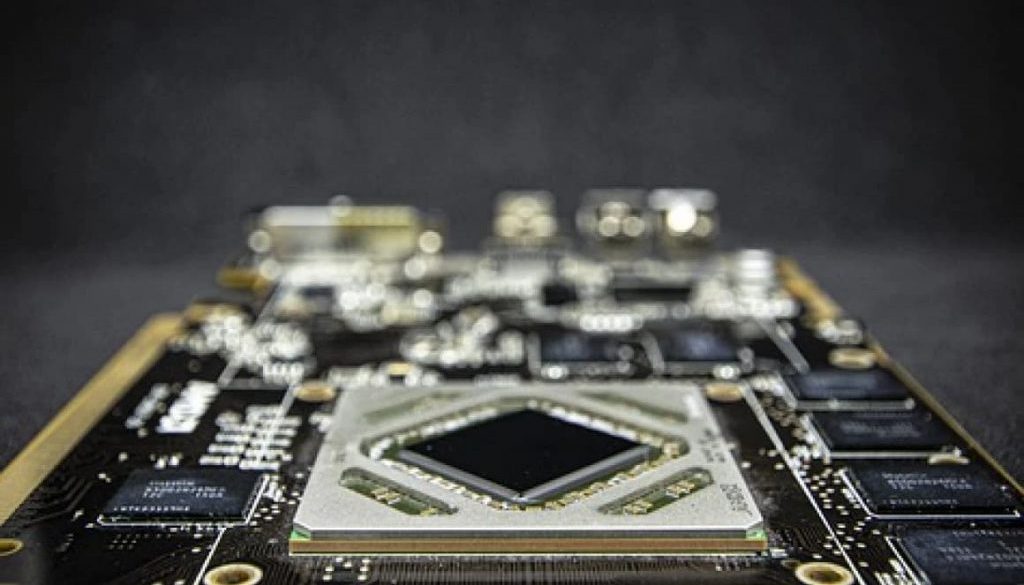

GPUs (Graphics Processing Units) were created to quickly render the graphical interface displayed on computer screens. Over time, their use extended to graphic design programs, video games, image processing and more. In this article, however, we will focus on their capacity for numerical processing and artificial intelligence.

A GPU can be thought of as a large number of small units capable of performing simple calculations simultaneously. Although this sounds simple, it gives GPUs exceptional processing power: doing 1,000 multiplications one after another is not the same as doing them all at once.
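To make the idea concrete, here is a minimal Python sketch of the two approaches. It is our own illustration, not INLOC Robotics code, and the function names are hypothetical. The first function performs the multiplications one after another. The second expresses the same work in data-parallel form: each product depends only on its own pair of inputs, so every index can be handed to a separate worker. The thread pool only stands in for a GPU's many small units. In standard CPython it will not run faster, and real GPU code would use a framework such as CUDA.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_sequential(a, b):
    """Multiply element by element, one product after another."""
    result = []
    for x, y in zip(a, b):
        result.append(x * y)
    return result


def multiply_data_parallel(a, b, workers=8):
    """Same products, expressed as independent per-index tasks.

    Each task reads only a[i] and b[i], so no task depends on another
    and all of them could run at once. The thread pool is only a
    stand-in for the many small units of a GPU (illustrative setup).
    Assumes a and b have the same length.
    """
    def product(i):
        return a[i] * b[i]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in input order, matching the sequential loop
        return list(pool.map(product, range(len(a))))


a = list(range(1000))
b = [2] * 1000
assert multiply_sequential(a, b) == multiply_data_parallel(a, b)
print(multiply_data_parallel(a, b)[:5])  # [0, 2, 4, 6, 8]
```

The key design point is that the parallel version never lets one result depend on another. That independence is what allows a GPU to assign each of the 1,000 multiplications to its own unit.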

This capacity played a key role in the rise of modern AI and, more generally, in bringing GPUs into research projects. Examples include complex fluid simulations, quantum chemistry calculations and the parallelization of calculations over large amounts of data.

Today, more and more companies are using GPUs in their systems. Two everyday examples are the facial recognition applications that detect faces on our smartphones using the phone's small GPU, and the automatic tagging of images on Facebook.

At INLOC Robotics we are not far behind in this field: our SewDef system includes our own AI, and we implement parts of our algorithms on GPUs to speed them up considerably. This opens the door to increasingly frequent use of this hardware in industry.

As a practical example, consider IoT, which many companies are implementing to monitor production in real time. Once the first IoT systems are in place, they can grow exponentially as more data is added. More computing power is then likely to be required, which in turn allows more elaborate analyses of production. Using GPUs would be the way forward to maintain the real-time monitoring that characterizes IoT.

Overcoming weaknesses:

When it comes to general-purpose GPU computing, there are two major weaknesses. First, GPUs were not originally designed with this use in mind, so a program tuned for one GPU may not achieve the same performance on the next generation of the same hardware.

The second is limited precision in calculations with decimal (floating-point) numbers. To increase speed, the hardware often works at reduced precision. This is not a problem for every application, but it can be for some.
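The effect of reduced precision is easy to see. Here is a minimal sketch using the Python standard library's `struct` module, which can store a number in IEEE 754 double (64-bit, format `d`), single (32-bit, `f`) and half (16-bit, `e`) precision and read it back. GPUs commonly offer the 32-bit and 16-bit formats to gain speed. The helper name `round_trip` is our own, chosen for illustration.

```python
import struct


def round_trip(value, fmt):
    """Store `value` in a binary float format and read it back as a Python float."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


x = 0.1
formats = [("64-bit double", "<d"), ("32-bit single", "<f"), ("16-bit half", "<e")]
for name, fmt in formats:
    stored = round_trip(x, fmt)
    # Print the value actually kept by the format and how far it is from 0.1
    print(f"{name:14} {stored!r:22} error = {abs(stored - x):.1e}")
```

Storing 0.1 this way gives back 0.1, 0.10000000149011612 and 0.0999755859375, respectively. In half precision the value is already off in the fifth decimal place. That is often tolerable for tasks such as image recognition, but it can matter when a calculation accumulates many such errors.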

However, growing demand for GPUs from the research sector has led manufacturers to focus their efforts on these weaknesses, reducing them enough that GPUs are already used today in a wide variety of devices and applications.

In my opinion, we have reached a turning point at which GPUs will make a strong entry into the industrial sector, solving a wide range of problems.

We hope this article has been useful to you. If you have an engineering project on your hands and think we can help, here is the link where you can contact us and tell us more about it.